How Flashka turned one question into a 20% lift

Flashka & userjourneys.ai

- 20% More Cards Studied

- 33% Longer Sessions

- 0 Meetings Required

The Flashka team celebrating #1 on the App Store in Education, above Duolingo and ChatGPT

At a glance:

- Agent autonomously identified a UX pattern causing mid-session dropoffs

- Designed and launched an A/B test in a single chat conversation

- 20% increase in cards studied per user per day

- Zero researcher time: from insight to running experiment in minutes

Flashka is an AI-powered flashcard app that turns any study material (lecture slides, textbooks, YouTube videos) into interactive quiz decks. Since launching, the app has grown to 1.3 million sign-ups, mostly students preparing for exams across Europe.

Simone De Marchi, Flashka's CPO, co-founded the company because he saw a gap: students were spending hours making flashcards instead of actually studying. Flashka generates them automatically, then uses spaced repetition and quizzes to help the material stick.

The product works. Students keep coming back. But Simone wanted to understand whether they were studying as deeply as they could be.

“What I love is that I can just ask a question and it actually does something about it. It's not a dashboard I have to go check. It sees what I'd miss and then helps me fix it.”

Simone De Marchi

A question, not a hypothesis

Simone didn't open userjourneys.ai with a theory. He opened a chat.

“How are quiz completion rates looking lately?”

The agent segmented three months of quiz sessions, 491,000 in total, by how many questions each student answered before stopping. The headline stat looked fine: ~26 questions per session on average.

But the shape of the data told a different story.

“The pattern isn't smooth decay,” the agent reported. “You get these pronounced spikes right at 10, 20, and 30 answered, almost like stair steps. That's usually a sign something in the product is creating natural stopping points rather than users randomly getting bored.”

The data was striking. At question 10, a sudden 10.7% cliff. At question 20, 12.8%. At question 40, 11.8%. Between those milestones, dropoff was gradual and natural. At the milestones themselves, students were leaving in waves.

Chart: % of quiz sessions ending at each question (Q1–Q80)

Every 10th question shows a dramatic spike: the checkpoint screen turns a pause into an exit.
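To make the shape of that analysis concrete, here is a minimal Python sketch of the idea: count how many sessions end at each question number, then flag any question whose exit share towers over its immediate neighbours. This is not the agent's actual method. The function names, the `ratio` threshold, and the toy session list are all assumptions made for illustration, and the sketch assumes the percentages are shares of all sessions.

```python
from collections import Counter


def exit_share_by_question(answered_counts):
    """Return {n: share of all sessions that ended after exactly n answers}."""
    total = len(answered_counts)
    counts = Counter(answered_counts)
    return {n: counts[n] / total for n in sorted(counts)}


def find_spikes(shares, ratio=2.0):
    """Flag question numbers whose exit share is at least `ratio` times
    the mean exit share of their immediate neighbours (n - 1 and n + 1).

    `ratio` is a hypothetical threshold chosen for this sketch.
    """
    spikes = []
    for n, share in shares.items():
        neighbours = [shares[m] for m in (n - 1, n + 1) if m in shares]
        if neighbours and share >= ratio * (sum(neighbours) / len(neighbours)):
            spikes.append(n)
    return spikes


# Made-up toy data (NOT Flashka's): questions answered per session.
toy_sessions = [3, 5, 7, 9, 10, 10, 10, 10, 11, 12, 15, 18, 19, 20, 20, 20, 21, 25]

shares = exit_share_by_question(toy_sessions)
print(find_spikes(shares))  # [10, 20]
```

A smooth decay curve would produce no flags here. Stair steps at round numbers are what point to something in the product rather than to random boredom.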

“What's driving that? Feels too tidy to be random.”

When Simone pressed for an explanation, the agent connected the pattern to a specific product feature: every 10 questions, Flashka showed a “Great progress!” checkpoint summary screen. It was designed to celebrate the student's effort. Instead, it was giving them permission to stop.

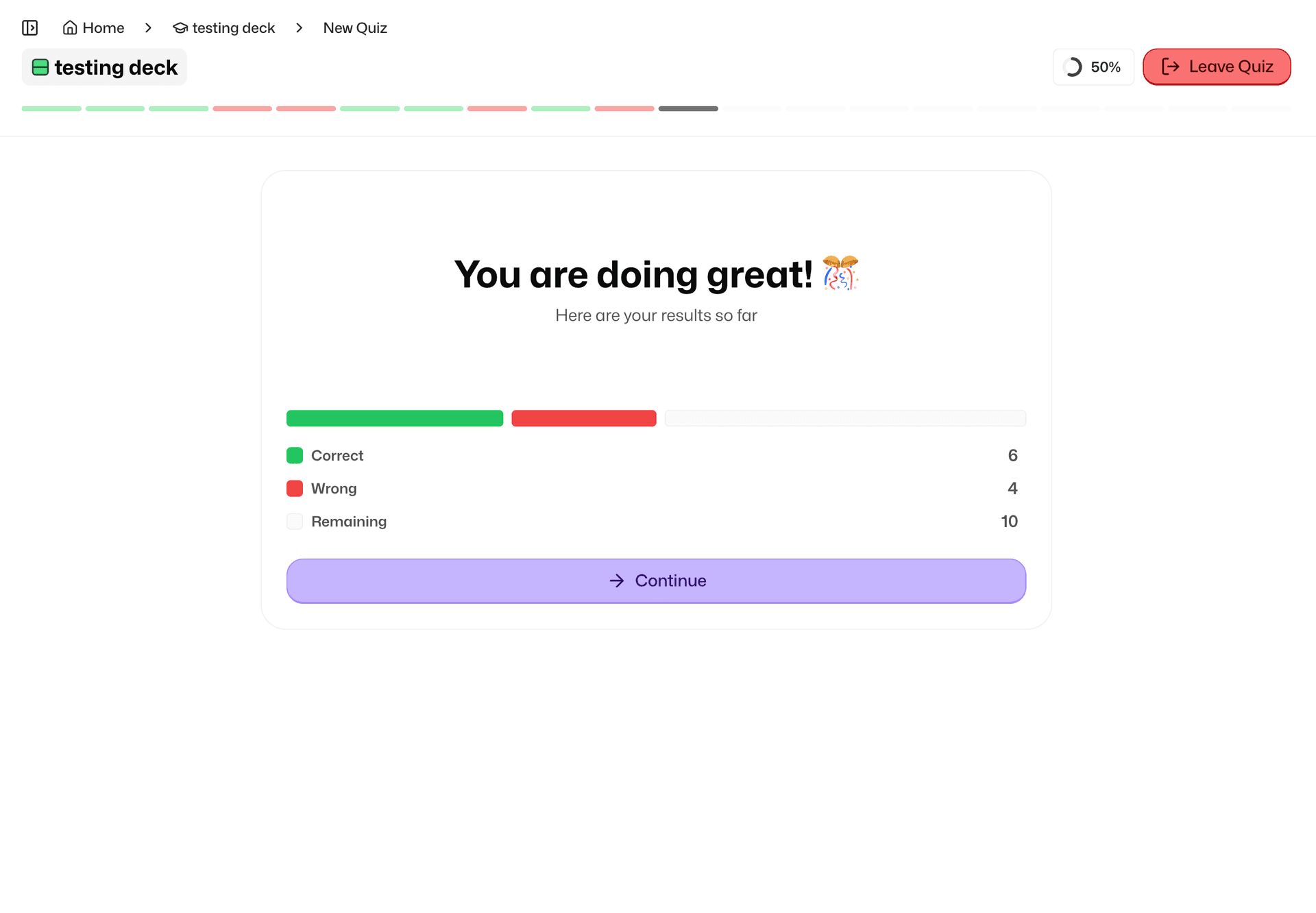

The checkpoint screen that appeared every 10 questions

“Two things are probably happening at once,” the agent explained. “It celebrates progress (nice), but it also creates a clean mini-finish line where it's easy to tap out. That's exactly the kind of pattern you see when a pause maps to round numbers.”

The insight wasn't that students were abandoning their quizzes. Most were completing their chosen length. It was that the checkpoint screen was anchoring students to shorter sessions. The pause became an exit.

From chat to live experiment

The agent didn't stop at the insight. It proposed a test: “The straightforward test is a 50/50 split: same flow, but half of users never see that pause, and we measure questions answered + cards studied. Want me to launch it as quiz-no-checkpoint?”

Simone's response: “Yeah let's do it.”

Within minutes, the experiment was live. Two arms: control kept the checkpoint screen, the variant removed it entirely. Exposure balanced at roughly 50/50 across 7,264 students in the first week.
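For readers curious what a 50/50 split like this can look like in code, below is a minimal sketch of deterministic, hash-based assignment in Python. It is one common approach, not a description of how userjourneys.ai or Flashka implement it. The experiment name `quiz-no-checkpoint` comes from the conversation above; the function names, the arm labels, and the user ID format are assumptions.

```python
import hashlib


def assign_arm(user_id: str, experiment: str = "quiz-no-checkpoint") -> str:
    """Deterministically map a user to 'control' or 'variant'.

    Hashing the experiment name together with the user ID keeps each
    user in the same arm on every session and splits users roughly
    evenly. (Assumed scheme, for illustration only.)
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 2
    return "control" if bucket == 0 else "variant"


def should_show_checkpoint(user_id: str) -> bool:
    """Control keeps the checkpoint screen; the variant never sees it."""
    return assign_arm(user_id) == "control"


# Hypothetical user IDs, used only to check that exposure is balanced.
arms = [assign_arm(f"user-{i}") for i in range(10_000)]
control_share = arms.count("control") / len(arms)
print(f"control share: {control_share:.1%}")
```

Because assignment depends only on the user ID and the experiment name, no assignment table needs to be stored, and a student sees the same experience every time they open a quiz.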

No research brief. No prioritization meeting. No sprint planning. A question in a chat became a running experiment before Simone finished his coffee.

The results

Students in the no-checkpoint group studied more. Median questions answered per user rose 33%, and daily cards studied trended toward a 20% lift as the experiment matured.

Students didn't need more sessions. They just went deeper in each one. Without the checkpoint interrupting their flow, they kept studying.
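The lift figures above are relative changes between the two arms. As a rough sketch of that arithmetic, the Python below compares the median of a variant group against the median of a control group. The helper name and the per-user numbers are invented for illustration. They are not Flashka's data, and the sketch does not attempt any significance testing.

```python
from statistics import median


def relative_lift(control, variant, stat=median):
    """Relative change of `stat` from control to variant, e.g. 0.25 for +25%."""
    base = stat(control)
    if base == 0:
        raise ValueError("control statistic is zero; relative lift is undefined")
    return (stat(variant) - base) / base


# Made-up questions answered per user in each arm (NOT Flashka's data).
toy_control = [10, 20, 30]
toy_variant = [15, 25, 35]

lift = relative_lift(toy_control, toy_variant)
print(f"{lift:.0%}")  # 25%
```

Using the median rather than the mean keeps a handful of marathon study sessions from dominating the comparison.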

“It was a feature we built to make students feel good. Turns out it was making them feel done.”

Simone De Marchi

What made this different

Simone's team didn't have a data analyst assigned to quiz flow optimization. They didn't have a backlog ticket about checkpoint screens. The insight came from an open-ended question to an AI agent that had access to their product data.

The agent did what would normally require an analyst pulling session data, a product manager interpreting the pattern, a designer proposing the change, and an engineer setting up the test. It happened in one conversation.

For Flashka, this is now how product decisions get made. Simone and his team use the userjourneys.ai agent daily: asking questions, tracking shipped features, running experiments. The checkpoint discovery wasn't a one-off. It was a Tuesday.

From insight to action

The pattern Flashka discovered isn't unique to flashcard apps. Every product has well-intentioned UX that quietly works against its goals: a confirmation dialog that becomes a cancel button, a summary screen that becomes an exit ramp, a celebration that becomes a stopping point.

The difference is whether you catch it. And how fast you can act on it.

Flashka went from “how are quiz completion rates looking?” to a live experiment with measurable uplift, without writing a ticket, scheduling a meeting, or waiting for a quarterly review.

That's what happens when an AI agent has access to your product data and the ability to act on what it finds.